SLAM (Simultaneous Localization and Mapping) system with four RGB-D cameras

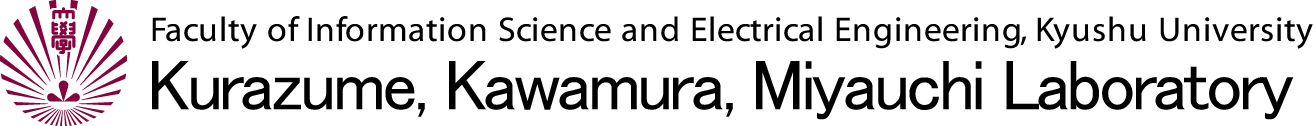

A SLAM (Simultaneous Localization and Mapping) system using four RGB-D cameras (Microsoft Kinect) has been developed to capture the 3D structure of the environment in real time as the robot moves. For accurate localization, the system employs CPS-SLAM (Cooperative Positioning System SLAM), in which the robot's position is estimated by a parent robot equipped with a total station (a laser-based surveying instrument).

| SLAM using four Kinect cameras | System configuration |

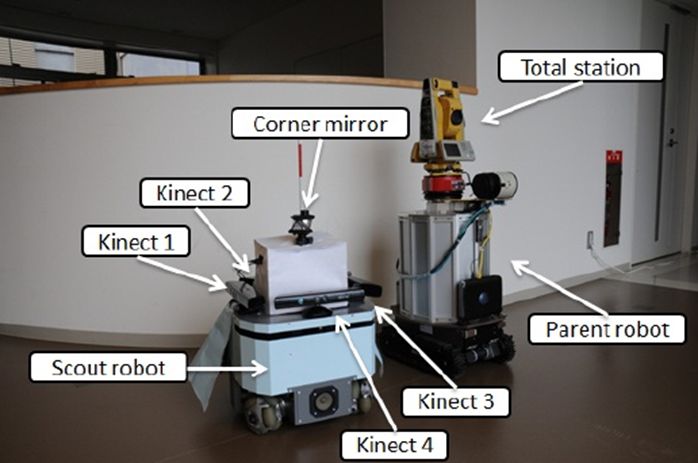

Measurement results |
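Because the four Kinects face different directions, their point clouds have to be expressed in one common frame before they can be combined with the robot pose supplied by CPS-SLAM. The sketch below shows that chain of rigid transforms (camera to robot, robot to world). It assumes a hypothetical planar layout (cameras at 90° yaw intervals, with `radius` and `height` chosen only for illustration), camera points given as x forward, y left, z up, and a planar robot pose (x, y, yaw); the real system's calibration and full 3D pose handling are not described here.

```python
import math
from typing import Iterable, List, Sequence, Tuple

Point = Tuple[float, float, float]
Mount = Tuple[float, float, float, float]  # (yaw [rad], x [m], y [m], z [m]) in the robot frame


def yaw_transform(p: Point, yaw: float, tx: float, ty: float, tz: float = 0.0) -> Point:
    """Rotate a point about the z axis by `yaw`, then translate it by (tx, ty, tz)."""
    c, s = math.cos(yaw), math.sin(yaw)
    x, y, z = p
    return (c * x - s * y + tx, s * x + c * y + ty, z + tz)


def camera_mounts(radius: float = 0.2, height: float = 1.0) -> List[Mount]:
    """Hypothetical layout: four cameras facing 0, 90, 180 and 270 degrees around the robot."""
    mounts = []
    for k in range(4):
        yaw = k * math.pi / 2
        mounts.append((yaw, radius * math.cos(yaw), radius * math.sin(yaw), height))
    return mounts


def merge_scans(scans: Sequence[Iterable[Point]], robot_pose: Tuple[float, float, float],
                mounts: Sequence[Mount]) -> List[Point]:
    """Express every camera's points (x forward, y left, z up) in the world frame."""
    rx, ry, ryaw = robot_pose  # planar pose, e.g. supplied by CPS-SLAM
    merged = []
    for points, (cyaw, cx, cy, cz) in zip(scans, mounts):
        for p in points:
            in_robot = yaw_transform(p, cyaw, cx, cy, cz)        # camera -> robot
            merged.append(yaw_transform(in_robot, ryaw, rx, ry))  # robot -> world
    return merged
```

For example, with the robot at (10, 5) and zero yaw, a point 1 m in front of the left-facing camera lands at about (10.0, 6.2, 1.0) in the world frame.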

Papers

- R. Kurazume, K. Yoneda, and S. Hirose, Feedforward and feedback dynamic trot gait control for quadruped walking vehicle, Autonomous Robots, Vol. 12, No. 2, pp.157-172, 2002.

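- 鄭 龍振, 大石 修士, 倉爪 亮, 岩下 友美, 長谷川 勉, Global 3D localization of a mobile robot using a wide-area laser-scanned map and an RGB-D camera, Meeting on Image Recognition and Understanding (MIRU2012), IS2-77, 2012.8.7

- 鄭 龍振, 大石 修士, 倉爪 亮, 長谷川 勉, Localization by XOR voxel matching using an RGB-D sensor and a 3D map, JSME Conference on Robotics and Mechatronics 2012 (ROBOMECH2012), 2A2-I10, 2012.5.27-29

- 鄭 龍振, 石橋 正教, 倉爪 亮, 岩下 友美, 長谷川 勉, Environmental measurement by an omnidirectional measurement robot equipped with four Kinects, 29th Annual Conference of the Robotics Society of Japan, 1O3-4, 2011.9.7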

Fast 3D localization for mobile robots using Normal Distributions Transform

We propose an efficient 3D global localization and tracking method for mobile robots operating in large-scale environments, using 3D geometric maps and RGB-D cameras. With the rapid advancement of high-resolution 3D range sensors, processing large-scale 3D data at high speed has become a critical challenge in robotic applications such as localization. To address this issue, the proposed method employs a Normal Distributions (ND) voxel representation. First, a 3D geometric map represented by point clouds is converted into multiple ND voxels, from which local features are extracted and stored as an environmental map. Range data captured by an RGB-D camera is likewise converted into ND voxels, and the corresponding local features are computed. For global localization and tracking, the similarity between ND voxels from the environmental map and those from the sensory data is evaluated using either local feature matching or the Kullback–Leibler divergence. The optimal robot pose is then estimated within a particle filter framework.

| Kinect color image | Kinect depth image | Localization process |
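The ND voxel step can be illustrated as follows: each voxel stores the mean and covariance of the points inside it, and a scan voxel is compared with the map voxel at the same grid index through the closed-form KL divergence between two Gaussians. This is a minimal sketch that assumes the scan has already been transformed into the map frame by a candidate particle pose. The voxel size, the `min_points` cutoff, the penalty for unmatched voxels, and the exponential weighting in `particle_weights` are illustrative choices, not values from the paper, and the local-feature-matching alternative is not shown.

```python
from collections import defaultdict
from typing import Dict, Tuple

import numpy as np

VoxelKey = Tuple[int, int, int]
NDVoxel = Tuple[np.ndarray, np.ndarray]  # (mean, covariance)


def build_nd_voxels(points: np.ndarray, voxel_size: float, min_points: int = 5) -> Dict[VoxelKey, NDVoxel]:
    """Bin an (N, 3) point cloud into cubic voxels and fit a Gaussian to each one."""
    buckets = defaultdict(list)
    for p in points:
        key = tuple(int(v) for v in np.floor(p / voxel_size))
        buckets[key].append(p)
    voxels = {}
    for key, pts in buckets.items():
        if len(pts) < min_points:  # too few points for a stable covariance
            continue
        pts = np.asarray(pts)
        cov = np.cov(pts, rowvar=False) + 1e-6 * np.eye(3)  # regularize flat voxels
        voxels[key] = (pts.mean(axis=0), cov)
    return voxels


def kl_gaussian(m0, s0, m1, s1) -> float:
    """KL(N(m0,S0) || N(m1,S1)) = 0.5 [tr(S1^-1 S0) + d^T S1^-1 d - k + ln(|S1|/|S0|)], d = m1 - m0."""
    k = m0.shape[0]
    s1_inv = np.linalg.inv(s1)
    d = m1 - m0
    logdet0 = np.linalg.slogdet(s0)[1]
    logdet1 = np.linalg.slogdet(s1)[1]
    return 0.5 * float(np.trace(s1_inv @ s0) + d @ s1_inv @ d - k + logdet1 - logdet0)


def match_score(map_voxels, scan_voxels, unmatched_penalty: float = 10.0) -> float:
    """Sum of KL divergences over scan voxels (lower means a better pose hypothesis)."""
    total = 0.0
    for key, (m, s) in scan_voxels.items():
        if key in map_voxels:
            total += kl_gaussian(m, s, *map_voxels[key])
        else:
            total += unmatched_penalty  # scan voxel falls where the map has no data
    return total


def particle_weights(scores, temperature: float = 1.0) -> np.ndarray:
    """Turn per-particle scores into normalized weights (lower score -> higher weight)."""
    scores = np.asarray(scores, dtype=float)
    w = np.exp(-(scores - scores.min()) / temperature)
    return w / w.sum()
```

In a particle filter, `match_score` would be evaluated once per particle (after transforming the scan by that particle's pose), and the resulting weights drive resampling.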

Papers

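- 鄭 龍振, 倉爪 亮, 岩下 友美, 長谷川 勉, Wide-area localization of a mobile robot using a large-scale 3D environmental map and an RGB-D camera, Journal of the Robotics Society of Japan, Vol.31, No.10, pp.896-906, 2013 (Robotics Society of Japan Best Paper Award, FY2014)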

- Shuji Oishi, Yongjin Jeong, Ryo Kurazume, Yumi Iwashita and Tsutomu Hasegawa, ND voxel localization using large-scale 3D environmental map and RGB-D camera, 2013 IEEE International Conference on Robotics and Biomimetics (ROBIO), pp.538-545, Shenzhen, Dec. 12-14, 2013 (Best Paper Award Finalist)

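- 鄭 龍振, 大石 修士, 倉爪 亮, 岩下 友美, 長谷川 勉, Global 3D localization of a mobile robot using a wide-area laser-scanned map and an RGB-D camera, Meeting on Image Recognition and Understanding (MIRU2012), IS2-77, 2012.8.7

- 鄭 龍振, 大石 修士, 倉爪 亮, 長谷川 勉, Localization by XOR voxel matching using an RGB-D sensor and a 3D map, JSME Conference on Robotics and Mechatronics 2012 (ROBOMECH2012), 2A2-I10, 2012.5.27-29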

Quadcopters and helicopters

We are developing quadcopters and helicopters for use in land surveying applications.

| Helicopter | Helicopter | Helicopter |

| Quadcopter | Quadcopter | Laser scanning from a quadcopter |

Papers

Spatial change detection using voxel classification by normal distributions transform

Detecting spatial changes in the environment surrounding a robot is critical for robotic applications such as search and rescue, security, and surveillance. This paper presents a fast spatial change detection method for mobile robots that uses an on-board RGB-D or stereo camera together with a high-precision 3D map generated by a laser scanner. In the proposed method, both the map and the real-time sensor data are converted into grid-based representations called Normal Distributions (ND) voxels using the normal distributions transform (NDT). The ND voxels are then classified into three categories based on their characteristics, and the map and sensor data are compared using these categories and the statistical features of the ND voxels. To further improve robustness, overlapping and voting techniques are introduced. The proposed real-time localization and spatial change detection methods were validated through experiments in both indoor and outdoor environments using a mobile robot equipped with real-time range sensors.

| Mobile robot with Kinect | Detected differences | ICRA 2019 Video |
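A sketch of the classify-then-compare idea follows. The text above does not name the three voxel categories, so this example assumes a common eigenvalue-based split (linear, planar, spherical) with a hypothetical `ratio` threshold. A scan voxel is flagged as changed when the map has no voxel at that index, when the categories disagree, or when the means are farther apart than a hypothetical `max_shift`. Overlapping grids and voting are omitted.

```python
from typing import Dict, List, Tuple

import numpy as np

VoxelKey = Tuple[int, int, int]
NDVoxel = Tuple[np.ndarray, np.ndarray]  # (mean, covariance)


def classify_voxel(cov: np.ndarray, ratio: float = 0.1) -> str:
    """Label an ND voxel by the shape of its covariance ellipsoid (hypothetical rule)."""
    small, mid, large = np.linalg.eigvalsh(cov)  # eigenvalues in ascending order
    if mid < ratio * large:
        return "linear"     # one dominant direction (e.g. a pole or edge)
    if small < ratio * mid:
        return "planar"     # two dominant directions (e.g. a wall or floor)
    return "spherical"      # no dominant direction (e.g. vegetation or clutter)


def detect_changes(map_voxels: Dict[VoxelKey, NDVoxel],
                   scan_voxels: Dict[VoxelKey, NDVoxel],
                   max_shift: float = 0.1) -> List[VoxelKey]:
    """Keys of scan voxels that do not agree with the map."""
    changed = []
    for key, (mean, cov) in scan_voxels.items():
        if key not in map_voxels:
            changed.append(key)  # structure appears where the map is empty
            continue
        map_mean, map_cov = map_voxels[key]
        if classify_voxel(cov) != classify_voxel(map_cov):
            changed.append(key)  # local shape differs
        elif np.linalg.norm(mean - map_mean) > max_shift:
            changed.append(key)  # same shape, but displaced
    return changed
```

This only catches structure that appears or changes in the scan; detecting removed structure (map voxels with no matching scan voxel) would additionally require knowing which voxels the sensor could actually see.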

Papers

- Ukyou Katsura, Kohei Matsumoto, Akihiro Kawamura, Tomohide Ishigami, Tsukasa Okada, Ryo Kurazume, Spatial change detection using voxel classification by normal distribution transformation, IEEE International Conference on Robotics and Automation 2019 (ICRA 2019), pp.2953-2959, Montreal, Canada, 2019.5.20-24, 2019

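- 桂 右京, 松本 耕平, 河村 晃宏, 倉爪 亮, 石上 智英, 岡田 典, A fast change detection method for range data using NDT: evaluation of detection accuracy in indoor and outdoor environments, JSME Conference on Robotics and Mechatronics 2019 (ROBOMECH2019), 2A1-R08, 2019.6.5-8

- 桂 右京, 倉爪 亮, 石上 智英, 岡田 典, A fast change detection method for range data using NDT, 36th Annual Conference of the Robotics Society of Japan, 3J3-01, 2018.9.5-7

- 桂 右京, 倉爪 亮, 石上 智英, 岡田 典, A fast change detection method for range data using NDT, JSME Conference on Robotics and Mechatronics 2017 (ROBOMECH2017), pp.2A2-O08, 2017.5.10-13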

Tour guide robot and personal mobility vehicle

We are developing a tour guide robot and a personal mobility vehicle that utilize not only their onboard sensors but also external sensors installed along streets.

Papers

- Kohei Matsumoto, Hiroyuki Yamada, Masato Imai, Akihiro Kawamura, Yasuhiro Kawauchi, Tamaki Nakamura, Ryo Kurazume, Development of a Tour Guide and Co-experience Robot System using the Quasi-Zenith Satellite System and the 5th-Generation Mobile Communication System at a Theme Park, ROBOMECH Journal, Vol.8, No.4, 2021, DOI:10.1186/s40648-021-00192-7

- Kohei Matsumoto, Hiroyuki Yamada, Masato Imai, Akihiro Kawamura, Yasuhiro Kawauchi, Tamaki Nakamura, Ryo Kurazume, Quasi-Zenith Satellite System-based Tour Guide Robot at a Theme Park, 2020 IEEE/SICE International Symposium on System Integration (SII), pp. 1212-1217, doi: 10.1109/SII46433.2020.9025964, Honolulu, Hawaii, USA, 2020.1.12-15, 2020

- Hiroyuki Yamada, Tomoki Hiramatsu, Masato Imai, Akihiro Kawamura, Ryo Kurazume, Sensor terminal "Portable" for intelligent navigation of personal mobility robots in informationally structured environment, 2019 IEEE/SICE International Symposium on System Integration (SII), Paris, 2019.1.14-16, 2019

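- 松本 耕平, 今井 将人, 山田 弘幸, 河村 晃宏, 川内 康裕, 中村 珠幾, 倉爪 亮, Development of a guide robot system for a theme park using the Quasi-Zenith Satellite System, JSME Conference on Robotics and Mechatronics 2019 (ROBOMECH2019), 1P1-R10, 2019.6.5-8

- 松本 耕平, 今井 将人, 山田 弘幸, 河村 晃宏, 倉爪 亮, Development of an autonomous guide robot for a theme park, 19th SICE System Integration Division Annual Conference (SI2018), pp.2051-2054, 2018.12.13-15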

- Kohei Matsumoto, Masato Imai, Hiroyuki Yamada, Akihiro Kawamura and Ryo Kurazume, Development of Autonomous Tour-Guide Robot System in a Theme Park, Proc. The 14th Joint Workshop on Machine Perception and Robotics (MPR18), PS4-7, Fukuoka, 2018.10.16-17

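- 今井 将人, 平松 知樹, 山田 弘幸, 河村 晃宏, 倉爪 亮, Construction of an informationally structured environment for personal mobility and guidance experiments at a theme park, JSME Conference on Robotics and Mechatronics 2018 (ROBOMECH2018), pp.2A2-D07, 2018.6.2-5

- 平松 知樹, 今井 将人, 山田 弘幸, 河村 晃宏, 倉爪 亮, Development of a guidance control system for personal mobility vehicles using compact sensor terminals, JSME Conference on Robotics and Mechatronics 2018 (ROBOMECH2018), pp.1A1-L10, 2018.6.2-5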

Autonomous lawn-mowing robot

We are developing an autonomous lawn-mowing robot equipped with a QZSS (MICHIBIKI) receiver that provides centimeter-level positioning via CLAS (Centimeter Level Augmentation Service), and a 3D LiDAR sensor for obstacle detection. For autonomous path planning and motion control toward multiple targets, we use the Nav2 framework in ROS2.

1st model autonomous lawn-mowing robot |

2nd model autonomous lawn-mowing robot |
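Before Nav2 can drive the mower toward multiple targets, the goals have to be generated. The following sketch, which is not the project's planner, converts CLAS latitude/longitude fixes into local east/north metres with a small-area equirectangular approximation and produces back-and-forth (boustrophedon) coverage waypoints over a rectangular lawn. The rectangular lawn, the `swath` width, and the function names are hypothetical.

```python
import math
from typing import List, Tuple

EARTH_RADIUS_M = 6378137.0  # WGS 84 semi-major axis


def latlon_to_local(lat: float, lon: float, lat0: float, lon0: float) -> Tuple[float, float]:
    """East/north offset in metres from (lat0, lon0); equirectangular, small areas only."""
    east = math.radians(lon - lon0) * EARTH_RADIUS_M * math.cos(math.radians(lat0))
    north = math.radians(lat - lat0) * EARTH_RADIUS_M
    return east, north


def boustrophedon(width: float, height: float, swath: float) -> List[Tuple[float, float]]:
    """Back-and-forth waypoints covering a width x height rectangle whose corner is the origin."""
    if swath <= 0:
        raise ValueError("swath must be positive")
    lanes = max(1, math.ceil(width / swath))
    waypoints = []
    for i in range(lanes):
        x = min((i + 0.5) * swath, width)  # lane centre, clipped to the boundary
        ends = (0.0, height) if i % 2 == 0 else (height, 0.0)  # alternate direction
        waypoints.extend((x, y) for y in ends)
    return waypoints
```

The resulting (x, y) list could then be converted into pose goals and handed to a multi-goal follower such as Nav2's waypoint follower; obstacle avoidance with the 3D LiDAR would remain Nav2's job.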

Papers

Automatic illuminance measurement with multiple robots

We are developing a multi-robot system that automatically measures illuminance to assess lighting conditions in large-scale warehouses. By measuring illuminance throughout expansive areas, the system helps verify that lighting levels are appropriate, supporting both energy efficiency and operational safety.

Measurement by the automatic illuminance measurement robots |

Automatic illuminance measurement robots |

| Illuminance measurement | Illuminance measurement |
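One way to turn the robots' point measurements into a warehouse-wide picture is spatial interpolation. The sketch below is an assumption rather than the system's documented algorithm: it estimates illuminance at grid-cell centres by inverse distance weighting (IDW) and lists the cells that fall below a threshold. The sample format, cell size, IDW `power`, and threshold are hypothetical parameters.

```python
import math
from typing import List, Tuple

Sample = Tuple[float, float, float]  # (x [m], y [m], illuminance [lx])


def idw(samples: List[Sample], x: float, y: float, power: float = 2.0) -> float:
    """Inverse-distance-weighted illuminance estimate at (x, y)."""
    if not samples:
        raise ValueError("need at least one measurement")
    num = den = 0.0
    for sx, sy, lux in samples:
        d = math.hypot(x - sx, y - sy)
        if d < 1e-9:
            return lux  # query point coincides with a measurement
        w = 1.0 / d ** power
        num += w * lux
        den += w
    return num / den


def low_light_cells(samples: List[Sample], width: float, height: float,
                    cell: float, threshold: float) -> List[Tuple[float, float]]:
    """Centres of grid cells whose interpolated illuminance is below `threshold`."""
    cells = []
    for i in range(int(width // cell)):
        for j in range(int(height // cell)):
            cx, cy = (i + 0.5) * cell, (j + 0.5) * cell
            if idw(samples, cx, cy) < threshold:
                cells.append((cx, cy))
    return cells
```

With several robots, the measurement samples from all of them would simply be pooled into `samples` before interpolation.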

Papers

Beach cleaning robot

We are developing a beach-cleaning robot designed to collect microplastic debris. The goal is to address the widespread problem of microplastic pollution in marine environments and to help ensure cleaner, safer beaches for both humans and wildlife.

Beach cleaning robot |

Beach measurement robot |

| Beach cleaning | Beach cleaning |

Papers

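- 宇野 光輝, 倉爪 亮, Development of a robot for collecting fragmented marine plastic debris, 23rd SICE System Integration Division Annual Conference (SI2022), 1A2-D04, 2022.12.14-16

- 有瀬 昌矢, 松本 耕平, 倉爪 亮, Development of a robot system for collecting fragmented marine plastic debris: debris detection and robot guidance using laser scanner reflection intensity, 23rd SICE System Integration Division Annual Conference (SI2022), 1A2-D11, 2022.12.14-16

- 宇野 光輝, 倉爪 亮, Development of a collection mechanism for fragmented marine plastic debris, 22nd SICE System Integration Division Annual Conference (SI2021), 1H4-03, 2021.12.15-17

- 有瀬 昌矢, 倉爪 亮, Development of a robot system for collecting fragmented marine plastic debris: classification of beach environments using laser scanner reflection intensity, JSME Conference on Robotics and Mechatronics 2021 (ROBOMECH2021), 1P2-G09, 2021.6.6-8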
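Two of the papers in this section (codes 1A2-D11 and 1P2-G09) use the reflection intensity of a laser scanner to detect debris and to distinguish beach environments. As a rough illustration only, the sketch below keeps points whose intensity falls in a hypothetical band `[lo, hi]`, bins them into grid cells, and returns the centroids of well-supported cells, nearest to the robot first, as candidate guidance targets. The intensity band, cell size, and hit count are assumptions; real values would depend on the scanner and on calibration against sand.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

ScanPoint = Tuple[float, float, float]  # (x [m], y [m], reflection intensity)


def debris_targets(points: List[ScanPoint], lo: float, hi: float,
                   robot_xy: Tuple[float, float] = (0.0, 0.0),
                   cell: float = 0.1, min_hits: int = 3) -> List[Tuple[float, float]]:
    """Centroids of grid cells with enough in-band returns, nearest to the robot first."""
    cells: Dict[Tuple[int, int], List[Tuple[float, float]]] = defaultdict(list)
    for x, y, intensity in points:
        if lo <= intensity <= hi:  # keep returns in the (hypothetical) debris band
            cells[(math.floor(x / cell), math.floor(y / cell))].append((x, y))
    targets = []
    for hits in cells.values():
        if len(hits) < min_hits:  # isolated returns are treated as noise
            continue
        n = len(hits)
        targets.append((sum(p[0] for p in hits) / n, sum(p[1] for p in hits) / n))
    rx, ry = robot_xy
    return sorted(targets, key=lambda t: math.hypot(t[0] - rx, t[1] - ry))
```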

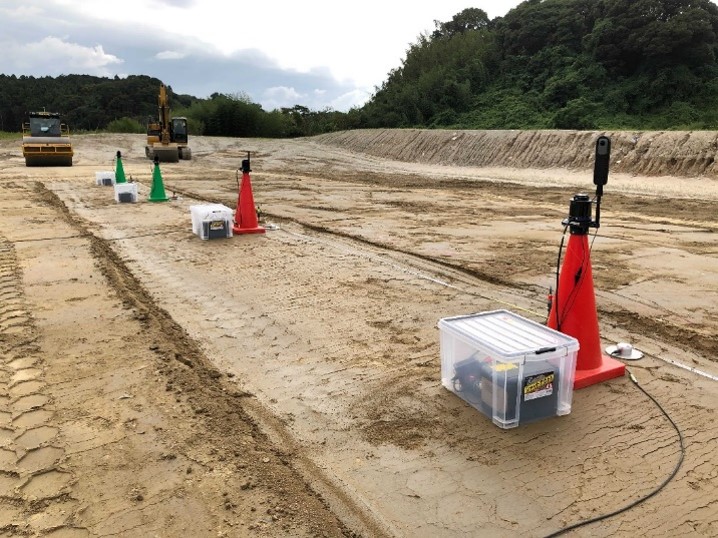

Construction robot

We are developing a retrofit-type remotely controlled backhoe, a compaction evaluation method for vibratory rollers using the multi-sensor terminal “Sensor Pod,” and a cyber-physical platform for construction sites called “ROS2-TMS for Construction.”

| A retrofit-type remotely controlled backhoe |  Compaction evaluation experiment by vibratory rollers using Sensor Pods |
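For the vibratory-roller work, a widely used intelligent-compaction indicator shows how accelerometer data can be turned into a stiffness measure: the Compaction Meter Value (CMV), the ratio of the acceleration amplitude at twice the drum's excitation frequency to the amplitude at the excitation frequency, scaled by a constant (commonly C = 300). This is not claimed to be the method in the papers of this section. CMV is traditionally computed from a drum-mounted accelerometer, and applying it to ground-mounted Sensor Pods is an assumption. The sketch estimates each harmonic amplitude with a single-bin DFT.

```python
import math
from typing import Sequence


def harmonic_amplitude(signal: Sequence[float], fs: float, freq: float) -> float:
    """Amplitude of the sinusoidal component at `freq` Hz via a single-bin DFT."""
    n = len(signal)
    re = sum(v * math.cos(2 * math.pi * freq * k / fs) for k, v in enumerate(signal))
    im = sum(v * math.sin(2 * math.pi * freq * k / fs) for k, v in enumerate(signal))
    return 2.0 * math.hypot(re, im) / n


def cmv(signal: Sequence[float], fs: float, drum_freq: float, c: float = 300.0) -> float:
    """Compaction Meter Value: c * A(2 * drum_freq) / A(drum_freq)."""
    a1 = harmonic_amplitude(signal, fs, drum_freq)
    if a1 == 0.0:
        raise ValueError("no energy at the drum frequency")
    a2 = harmonic_amplitude(signal, fs, 2 * drum_freq)
    return c * a2 / a1
```

The single-bin estimate is exact only when the window holds a whole number of excitation cycles; otherwise spectral leakage biases the ratio, which is why a windowed FFT is often used in practice.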

Papers

- Tomoya Kouno, Yuichiro Kasahara, Kota Akinari, Akinosuke Tsutsumi, Takayoshi Hachijo, Shunsuke Kimura, Yutaro Fukase, Yuki Miyashita, Takashi Yokoshima, Taro Abe, Daisuke Endo, Takeshi Hashimoto, Keiji Nagatani, Genki Yamauchi, Ryo Kurazume, Field Implementation of an Automated Hydraulic Excavator Using ROS2-TMS for Construction and OPERA, 2026 IEEE/SICE International Symposium on System Integration (SII), Cancun, Mexico, 2026.1.11-14, 2026

- Takayoshi Hachijo, Yutaro Fukase, Takashi Yokoshima, Yuki Miyashita, Shunsuke Kimura, Masanori Suzuki, Yuichiro Kasahara, Tomoya Kouno, Koshi Shibata, Ryo Kurazume, Daisuke Endo, Genki Yamauchi, Takeshi Hashimoto, 3D Measurement System for Soil Loading by an Autonomous Backhoe using OPERA, 42nd International Symposium on Automation and Robotics in Construction (ISARC 2025), pp.1501-1506, doi:10.22260/ISARC2025/0195, 2025.7.28-31, 2025

- Ryuichi Maeda, Tomoya Kouno, Kohei Matsumoto, Yuichiro Kasahara, Tomoya Itsuka, Kazuto Nakashima, Yusuke Tamaishi and Ryo Kurazume, Sensor Pods and ROS2-TMS for Construction for Cyber-Physical System at Earthwork Sites, IEEE International Symposium on Safety, Security, and Rescue Robotics (SSRR), pp.58-63, doi:10.1109/SSRR62954.2024.10770031, New York, 2024.11.12-14, 2024

- Yuichiro Kasahara, Tomoya Itsuka, Koshi Shibata, Tomoya Kouno, Ryuichi Maeda, Kohei Matsumoto, Shunsuke Kimura, Yutaro Fukase, Takashi Yokoshima, Genki Yamauchi, Daisuke Endo, Takeshi Hashimoto, and Ryo Kurazume, Task management system for construction machinery using the open platform OPERA, 2024 IEEE International Conference on Robot & Human Interactive Communication (RO-MAN), pp.1929-1936, doi:10.1109/RO-MAN60168.2024.10731421, Pasadena, 2024.8.26-30, 2024

- Koshi Shibata, Yuki Nishiura, Yusuke Tamaishi, Kohei Matsumoto, Kazuto Nakashima, Ryo Kurazume, Development of a Retrofit Backhoe Teleoperation System Using Cat Command, 2024 IEEE/SICE International Symposium on System Integration (SII), pp.1486-1491, doi:10.1109/SII58957.2024.10417625, Ha Long, Vietnam, 2024.1.8-11, 2024

- Yusuke Tamaishi, Kentaro Fukuda, Kazuto Nakashima, Ryuichi Maeda, Kohei Matsumoto, Ryo Kurazume, Evaluation of ground stiffness using multiple accelerometers on the ground during compaction by vibratory rollers, 40th International Symposium on Automation and Robotics in Construction (ISARC 2023), Chennai, 2023.7.4-7, 2023